Why Your Audio Quality Is Quietly Killing Your Credibility

The Invisible Barrier to Connection

We have all experienced "Zoom fatigue", that specific, draining exhaustion that sets in after a day of virtual meetings or remote presentations. While we often blame the length of the meeting or the screen time, the primary culprit is often invisible to the eye but taxing to the mind: poor audio quality.

A behavioral scientist views the ear as merely a "vehicle to the brain." The problem isn't just that you are hard to hear; it is that poor audio makes it significantly harder for the listener’s brain to switch between stimuli. When your voice is competing with background noise or room echo, the brain must constantly work to isolate the speaker’s signal from the environmental noise. This struggle breaks the natural flow of connection, leading to a loss of interest and a rapid depletion of cognitive energy, regardless of how compelling your content might be.

The "Fluency" Trap: When Your Mic Makes You Look Unintelligent

A groundbreaking study by researchers Eryn Newman and Norbert Schwarz, titled "Good Sound, Good Research," highlights a startling psychological phenomenon known as "cognitive fluency", the ease or difficulty with which our brains process information.

In their research, participants were presented with identical scientific conference talks and radio interviews from NPR’s Science Friday. The researchers simply altered the acoustic quality, introducing a slight echo or a simulated bad phone line. The results were devastating: despite the content being exactly the same, listeners rated the research as "less important." Furthermore, they perceived the speakers as less intelligent, less competent, and less likable solely because of the technical quality of the recording.

Most critically, audio quality alone was sufficient to trigger a total preference reversal: whoever had the better audio was considered the better researcher working on the more important project. One specialist's warning puts it bluntly:

"Listeners are likely to attribute any difficulty they have in understanding you to the quality of your presentation and the quality of your research. Sometimes it may be better not to be recorded at all than to accept the adverse consequences of a poor recording."

For experts, this is a profound pain point. Years of rigorous work can be dismissed in seconds because the brain misreads processing difficulty, a "cognitive stumble", as a sign that something is fundamentally wrong with the content itself.

The 35% Brain Drain: The Science of Listener Fatigue

Why is poor audio so physically exhausting? Psychoacoustic research conducted by the global audio brand EPOS used pupillometry, which measures pupil dilation as a proxy for mental effort, and found that the brain works 35% harder to interpret information when audio is compromised.

This increased load is caused by "temporal smearing." When sound reflects off hard surfaces, the decaying tail of each syllable overlaps with the syllables that follow, masking the transient details of speech (consonants like 't', 'k', or 'p'). The listener's brain must then spend that extra effort "de-smearing" the speech to recover the transients it needs to decode the linguistic content.
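
The masking effect described above can be put into rough numbers. The sketch below is a simplification, not a psychoacoustic model: it assumes the reverberant tail decays linearly in decibels, losing 60 dB over the room's reverberation time (RT60), and it treats a consonant as masked whenever the previous syllable's tail is still louder than the consonant. The function names, the 100 ms gap, and the 15 dB vowel-to-consonant difference are illustrative assumptions, not measured values.

```python
def tail_level_db(rt60_s: float, gap_s: float) -> float:
    """Level of a syllable's reverberant tail gap_s seconds after the
    syllable ends, in dB relative to the syllable itself.

    Assumes an idealized decay that is linear in dB: 60 dB per rt60_s.
    """
    if rt60_s <= 0:
        raise ValueError("rt60_s must be positive")
    return -60.0 * gap_s / rt60_s


def consonant_is_masked(rt60_s: float, gap_s: float, consonant_rel_db: float) -> bool:
    """Crude masking test (an assumption of this sketch): the consonant
    counts as masked when the previous tail is still louder than it."""
    return tail_level_db(rt60_s, gap_s) > consonant_rel_db


if __name__ == "__main__":
    GAP_S = 0.10            # assumed: consonant arrives 100 ms after the vowel
    CONSONANT_REL_DB = -15  # assumed: consonant is 15 dB quieter than the vowel
    for label, rt60 in [("treated room", 0.3), ("bare room", 0.8)]:
        tail = tail_level_db(rt60, GAP_S)
        masked = consonant_is_masked(rt60, GAP_S, CONSONANT_REL_DB)
        print(f"{label}: tail at {tail:.1f} dB, consonant masked: {masked}")
```

Under these assumed values, the tail in the shorter-decay room has dropped below the consonant by the time it arrives, while in the longer-decay room the tail is still louder, which is the overlap the paragraph above calls smearing.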

By utilizing high-quality audio or EPOS BrainAdapt™ technologies, you can mitigate this "brain fatigue" and unlock measurable benefits:

Better Memory Recall: Subjects’ memory recall improved by 10% when listening to clear audio.

Higher Word Recognition: Improved clarity allows the brain to accurately interpret speech without constant "best-guess" gap-filling.

Lower Stress Levels: Reducing the effort required to listen lowers the physiological stress associated with filtering out unwanted stimuli.

The Gear Paradox: Why Your $3,000 Microphone Might Be Making You Sound Worse

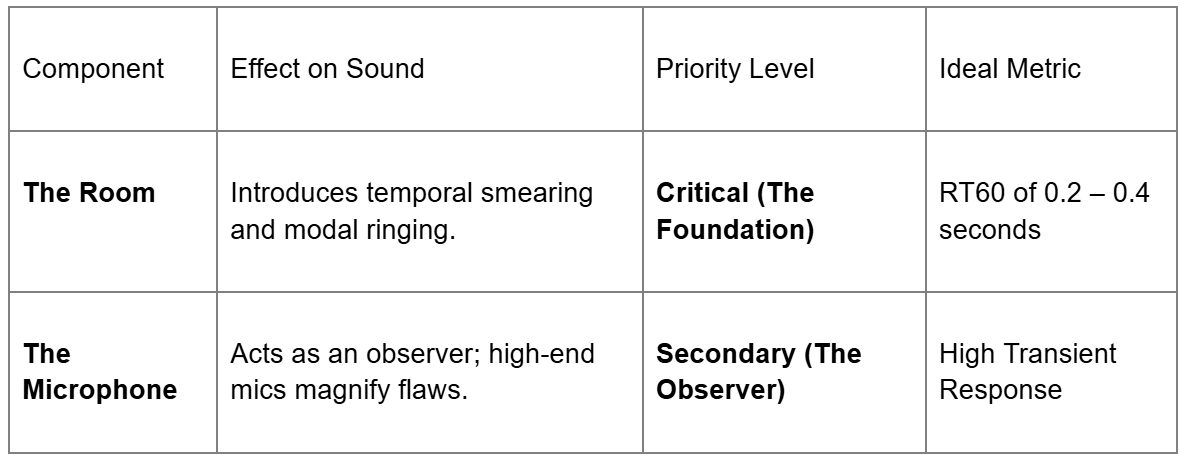

In audio engineering, we follow the "Weakest Link" theory: the signal chain is strictly limited by the component with the lowest fidelity. In most home offices, the weakest link isn't the microphone; it is the room’s RT60 (Reverberation Time).
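
For a rough sense of what RT60 looks like in a home office, Sabine's equation estimates it from the room's volume and the total absorption of its surfaces: RT60 ≈ 0.161 × V / A, with V in cubic metres and A as the sum of each surface area times its absorption coefficient. The sketch below applies it to a hypothetical 4 m × 3 m × 2.5 m room. The room size and every absorption coefficient are assumed for illustration and are not taken from any measurement, and Sabine's equation itself is only an approximation for small, furnished rooms.

```python
SABINE_CONSTANT = 0.161  # seconds per metre, metric form of Sabine's equation


def rt60_sabine(volume_m3: float, surfaces) -> float:
    """Estimate RT60 in seconds.

    surfaces: iterable of (area_m2, absorption_coefficient) pairs.
    """
    total_absorption = sum(area * alpha for area, alpha in surfaces)
    if total_absorption <= 0:
        raise ValueError("total absorption must be positive")
    return SABINE_CONSTANT * volume_m3 / total_absorption


if __name__ == "__main__":
    # Hypothetical room dimensions in metres.
    length, width, height = 4.0, 3.0, 2.5
    volume = length * width * height
    floor = ceiling = length * width
    walls = 2 * height * (length + width)

    # Assumed coefficients: hard, reflective surfaces everywhere.
    bare = [(floor, 0.05), (ceiling, 0.05), (walls, 0.05)]
    # Assumed coefficients: a rug on the floor, soft furnishings on the walls.
    treated = [(floor, 0.30), (ceiling, 0.05), (walls, 0.25)]

    print(f"bare room:    RT60 ~ {rt60_sabine(volume, bare):.2f} s")
    print(f"treated room: RT60 ~ {rt60_sabine(volume, treated):.2f} s")
```

The point of the comparison is the direction of the change rather than the exact figures: adding absorption shortens the decay, and that happens upstream of whatever microphone is plugged in.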

High-end Large Diaphragm Condenser (LDC) microphones, like a $3,000 Neumann U87, are designed for extreme sensitivity and rapid transient response. They utilize a low-mass diaphragm to capture every subtle detail. However, in an untreated room, "detail" includes the reality of your space: computer fans, HVAC hum, and off-axis coloration from flutter echoes. The failure is not the microphone’s; it is the user’s failure to provide a reality worth capturing.

Conversely, dynamic microphones (like the Shure SM7B) act as mechanical filters. Their higher-mass diaphragms have more inertia, naturally "ignoring" low-energy background reflections and focusing on the high-energy source directly in front of them.


High Stakes: From Virtual Courts to the "Trust Halo"

The impact of audio quality extends into the highest-stakes environments imaginable. In the study "Sound and Credibility in the Virtual Court," researchers examined how technological glitches influence legal decision-making.

The data showed that when witnesses presented evidence via low-quality audio, they were consistently rated as less credible, reliable, and trustworthy. Most alarmingly, this difficulty in processing led to a shift in "evidence weighting." Even when the content was remembered correctly, jurors gave significantly lower weighting to evidence delivered via poor audio in their final guilt judgments (a statistically significant effect, with a partial eta squared of ηp² = .05).
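
For readers unfamiliar with the effect size quoted above, partial eta squared is the share of variance attributable to a factor once other factors are set aside: the effect's sum of squares divided by the sum of the effect and error sums of squares. The sketch below shows that calculation, plus the equivalent form based on an F statistic. All input numbers are hypothetical, chosen only so the result lands on .05; they are not the study's data.

```python
def partial_eta_squared(ss_effect: float, ss_error: float) -> float:
    """Partial eta squared from sums of squares."""
    return ss_effect / (ss_effect + ss_error)


def partial_eta_squared_from_f(f_value: float, df_effect: int, df_error: int) -> float:
    """Equivalent form using an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    print(round(partial_eta_squared(ss_effect=5.0, ss_error=95.0), 3))
    print(round(partial_eta_squared_from_f(f_value=10.0, df_effect=1, df_error=190), 3))
```

Read this way, a value of .05 means audio quality accounted for roughly 5% of the variance in the judgments that was not explained by other factors.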

"These results show that audio quality influences perceptions of witnesses and their evidence... audio quality warrants consideration in trial proceedings."

The Future of Human-Sounding AI: Crossing the Uncanny Valley

The vital importance of natural sound is perhaps best proven by the future of AI. Google’s NotebookLM "Audio Overviews" has managed to cross the "uncanny valley" (the zone where almost-human AI feels unsettling rather than convincing) by intentionally adding "magic ingredients" that traditional audio engineering once tried to eliminate.

The key to listenability isn't technical perfection; it is the inclusion of disfluencies (the "ums," "ahs," and natural pauses). Humans use these cues as social signifiers of authenticity. By applying advanced text-to-speech modeling to introduce natural emphasis and pacing, AI developers are focusing on the "negative space" between hosts. This serves as the "social glue" that technical perfection lacks, reinforcing that human engagement requires sound that feels organic and effortless to process.

The Verdict: A Forward-Looking Summary

In our digital-first economy, audio quality is a necessity, not a luxury. Better audio translates directly into higher brand credibility, increased listener retention, and a significantly lower cognitive load for your audience.

When you invest in your sound, you are essentially paying the "35% tax" on your audience's behalf. You are removing the barriers to their understanding and allowing your expertise to shine without the cognitive stumble of technical interference.

If your voice is the only thing your audience has to go on, are you making them work too hard to believe you?

Ready to grow your business through podcasting? Let’s launch your podcast together. Get in touch!